It’s been an absolutely crazy couple of weeks at work so I haven’t had much time to work on my personal projects. I have been doing bits and pieces though. I finished a set of updates to Mountaineer (I have two more features to add on my roadmap), started work on bringing GEOM to a point where I can work on it again and distribute it, and have begun work on a new project of sorts.

Mountaineer 1.2

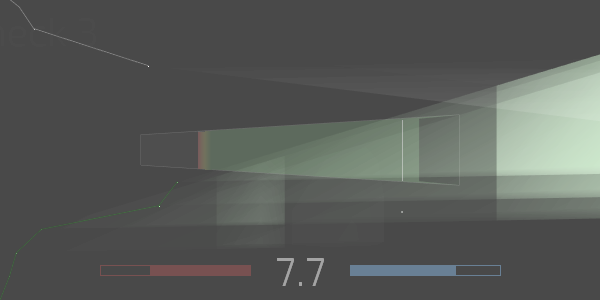

I had an idea for Mountaineer a little while ago (maybe around early October) – real-time display of its predictions on the graph while the joint is actually being smoked. What I wanted to achieve was to visualise an ‘envelope’ of predictions built from minimum, maximum and average expected values. The idea being that there is an extremely high chance that, for example, the total number of drags for the joint will fall within these values (tending towards the average value).

As it turned out, this required a pretty significant overhaul of the prediction system – it used to be pretty hacked-together, in that there was a set of global variables representing the current linear regression. Now I have a Regression object which is nice and modular – I generate a new one every time an event changes the regression. I query the ‘current’ regression whenever I need information that I used to get from the global variables, but I can also query past regressions to build a visualisation of predictions-over-time.

This lets me see how the earlier predictions in the session compare to what actually happened, and helps me see the overall trend in an intuitive way.
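As a rough illustration of the snapshot idea (not Mountaineer's actual code), here is a minimal Python sketch. It assumes each sample is a hypothetical (elapsed time, cumulative value) pair and that the fit is an ordinary least-squares line; the names `Regression`, `RegressionHistory`, `record` and `predictions_over_time` are invented for the example.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class Regression:
    """Immutable least-squares line fitted to the samples seen so far."""
    slope: float
    intercept: float
    fitted_at: float  # x value of the sample that triggered this fit

    @classmethod
    def fit(cls, samples: Sequence[Tuple[float, float]]) -> "Regression":
        n = len(samples)
        if n < 2:
            raise ValueError("need at least two samples to fit a line")
        mean_x = sum(x for x, _ in samples) / n
        mean_y = sum(y for _, y in samples) / n
        sxx = sum((x - mean_x) ** 2 for x, _ in samples)
        if sxx == 0:
            raise ValueError("all x values are identical")
        sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
        slope = sxy / sxx
        return cls(slope, mean_y - slope * mean_x, samples[-1][0])

    def predict(self, x: float) -> float:
        return self.slope * x + self.intercept


class RegressionHistory:
    """Keeps one Regression snapshot per recorded event (hypothetical design)."""

    def __init__(self) -> None:
        self._samples: List[Tuple[float, float]] = []
        self._snapshots: List[Regression] = []

    def record(self, x: float, y: float) -> None:
        # Assumes x values are distinct (e.g. strictly increasing timestamps).
        self._samples.append((x, y))
        if len(self._samples) >= 2:
            self._snapshots.append(Regression.fit(self._samples))

    @property
    def current(self) -> Regression:
        if not self._snapshots:
            raise LookupError("no regression has been fitted yet")
        return self._snapshots[-1]

    def predictions_over_time(self, x: float) -> List[Tuple[float, float]]:
        # What each past snapshot predicted for the same target x.
        return [(r.fitted_at, r.predict(x)) for r in self._snapshots]


history = RegressionHistory()
for t, value in [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]:
    history.record(t, value)
trend = history.predictions_over_time(10.0)
```

Because every snapshot is frozen, plotting `trend` against what actually happened is just a matter of walking the list; nothing that used to live in a global variable can change underneath the graph.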

I have two other things on my roadmap for Mountaineer.

The first is to set up multiple display options for the joint itself. I have some sketches for a hyper-minimal version, but once the system is set up, it will be easy for me to add other display modes.

The second change is much, much more significant: I want to set the software up so that it can read files that have incomplete data. Right now, if I were to smoke a joint and only record the volume over time (and not bother recording all the drags, ash, lights, etc.), it would completely throw off my drag calculations and potentially even crash the analysis suite when I loaded the file. Similarly, if I only wanted to record the joint shape and not record a session at all, that would also be a problem. I know how I’m going to go about setting up this behaviour, but it’ll definitely take me a solid day, so I’m putting it off until I have the spare time.
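One common way to tolerate incomplete files, sketched here in Python purely as an illustration (this is not necessarily the approach planned for Mountaineer), is to treat every recorded channel as optional and have each analysis report "not available" instead of assuming the data exists. The JSON layout and the field names `shape`, `volume` and `drags` are assumptions made up for the example.

```python
import json
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Session:
    # None means "this channel was never recorded" (hypothetical fields).
    shape: Optional[List[float]] = None
    volume: Optional[List[float]] = None
    drags: Optional[List[float]] = None

    @classmethod
    def from_json(cls, text: str) -> "Session":
        raw = json.loads(text)
        # dict.get returns None for missing keys, so partial files load fine.
        return cls(
            shape=raw.get("shape"),
            volume=raw.get("volume"),
            drags=raw.get("drags"),
        )

    def available(self) -> Set[str]:
        # Names of the channels that are actually present in this file.
        return {name for name, value in vars(self).items() if value is not None}


def drag_count(session: Session) -> Optional[int]:
    # Each analysis checks for its own inputs instead of assuming them.
    if session.drags is None:
        return None
    return len(session.drags)


volume_only = Session.from_json('{"volume": [1.0, 0.8, 0.5]}')
full = Session.from_json('{"volume": [1.0, 0.5], "drags": [12.0, 40.5, 71.0]}')
```

With this shape, a volume-only file simply yields `None` for the drag statistics rather than skewing them or crashing the analysis.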

GEOM Reimplementation

I opened up my old GEOM project with the intention of sprucing it up to the point that it would be distributable. The main task was to update the Oculus SDK from 0.4 to 0.8. Once I did that, however, I hit a strange bug where the framerate dropped so low that it was totally unplayable (often below 20 fps). Opening up the Unity Profiler to check out what was going on, I was a little alarmed to see that more than 95% of the CPU time was being taken up by something Unity referred to only as ‘Overhead’.

After a ton of troubleshooting, I wound up being unable to work out what was causing the problem.

Because the rest of the GEOM codebase is generally terrible (it was one of my first major projects), I decided the best option was to throw the whole lot out and start again from scratch. So I’ve been working on that for the past few weeks.

Very frustrating, however, has been the discovery that the mesh-generation method I was using in the original GEOM seems to be genuinely the best option outside of perhaps geometry shaders (which I haven’t played with yet). All the more robust and efficient mesh generation setups I’ve been using don’t play nicely with Unity for various reasons.

My next move is to give geometry shaders a shot (something I’m not hopeful about because of the specific behaviours I want to achieve), and then to try reimplementing the original mesh generation technique in a more efficient and generally useful way.

Whispers of a New Project

I play a lot of piano in my spare time – I don’t really play pieces very often (with a few exceptions): most of my time is spent improvising. I spend about as much time exploring the structure underlying music as I do actually playing music. A little while ago I found the most interesting structure I’ve seen yet. However, it’s a little bit too intricate and numerical for me to do by hand, so I’ve realised I’m going to need to move into software to keep exploring it. I’ve done some initial experiments which are looking extremely promising, so when I get some time I’m going to spend a solid day creating the initial version of a new project, which will probably wind up being an algorithmic composer of some form. Stay tuned!

That’s all from me for now. See you all later!